The Algorithm Aversion Paradox

Why we hate AI errors (until we don't)

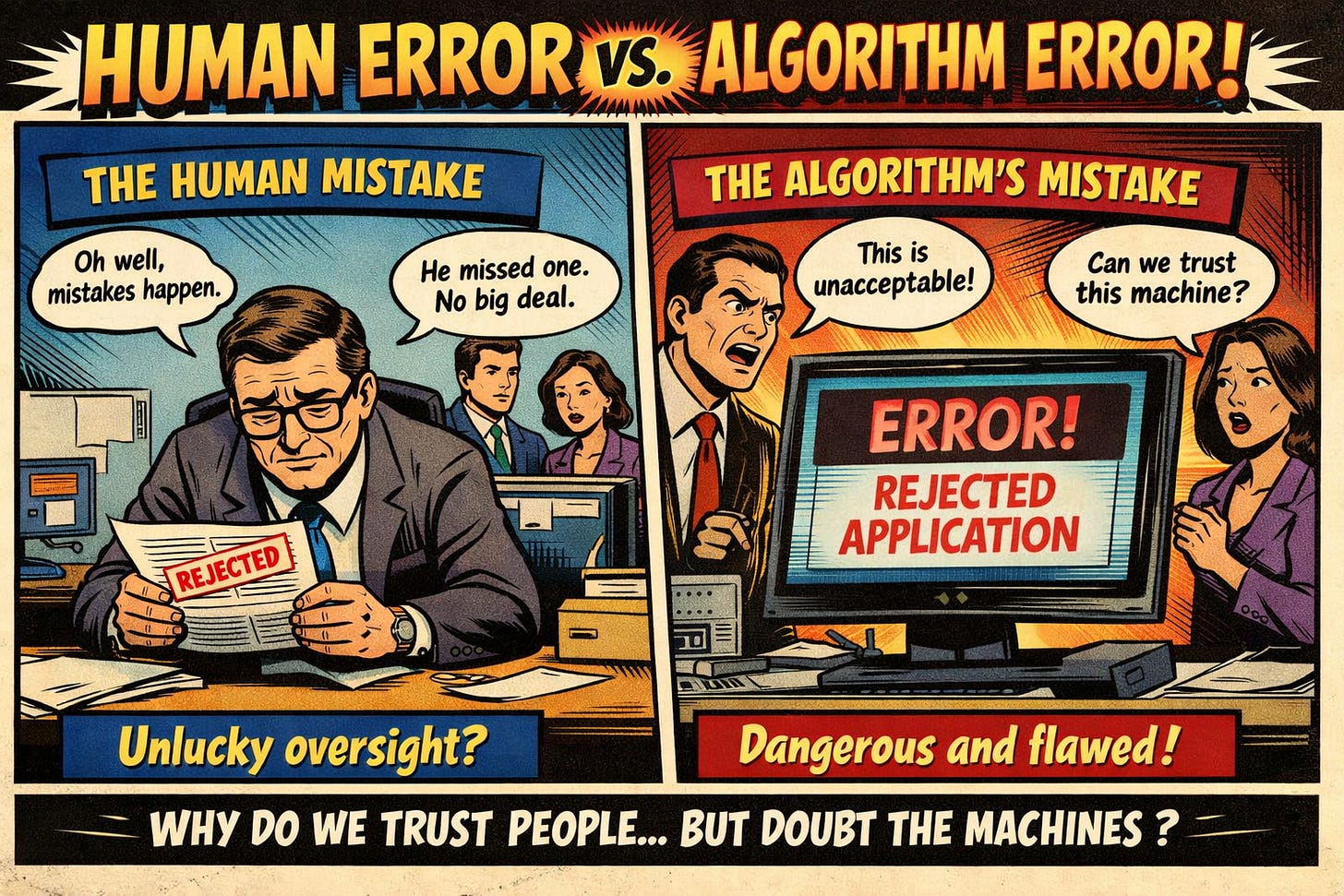

There’s a curious pattern in how people judge mistakes. When a human makes a mistake (e.g., an admissions officer overlooks a failing student’s application), we might shrug it off as an unfortunate oversight. However, when it’s an algorithm making the exact same error, suddenly it’s evidence that machines can’t be trusted with important decisions.

This double standard, judging algorithmic errors more harshly than identical human mistakes, has been well documented for decades. Paul Meehl highlighted a version of this as early as 1954, when clinicians resisted evidence that simple statistical models could outperform trained experts. Researchers call it algorithm aversion, and it’s been treated as a fundamental barrier to AI adoption. New research from Tariq and colleagues at the University of Waterloo suggests we may be thinking about this problem the wrong way. The issue may not be algorithms at all, but the discomfort that comes with change.

What the researchers found

Hamza Tariq and his colleagues ran two studies with nearly 1,200 participants, building on decades of research into algorithm aversion. The phenomenon was first properly documented by Dietvorst and colleagues (2015), who found that people became much less likely to use algorithms after seeing them make mistakes, even when those algorithms still outperformed humans overall. Since then, researchers have proposed various explanations: people expect algorithms to be perfect; algorithms can’t learn from experience the way humans can; they’re black boxes we can’t understand; or they feel dehumanising for important decisions.

Tariq’s team tested a different hypothesis. They presented scenarios where either a human or an algorithm made mistakes: missing failing students in college admissions, or overlooking defective speakers in quality control. The error rate was identical — both decision-makers failed to detect roughly 40–50% of the known problem cases.

Before showing participants the errors, researchers told them which method was “conventional” — either the human or the algorithm had been used for 10 years, was adopted by 85% of similar organisations, and was integral to the system.

When humans were described as the conventional choice, participants judged algorithmic errors significantly more severely. In the admissions scenario, the algorithm’s mistakes were rated 5.01 out of 6 for seriousness, whilst the human’s identical errors were rated just 4.23. More tellingly, 55% of participants recommended sticking with the human for future use, compared to only 11% who’d keep the algorithm.

However, when researchers flipped the script and described the algorithm as conventional, the bias shrank substantially. Algorithmic errors were still judged slightly more harshly, but when asked which system should be used going forward, participants split evenly between human and algorithm. In the quality control scenario, they actually preferred keeping the algorithm (31% vs 7%).
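To put the reported figures side by side, here is a small Python sketch that uses only the numbers quoted above. The variable and function names are mine, not the researchers’, and the two preference ratios come from different scenarios (admissions and quality control), so treat the comparison as a rough illustration rather than a like-for-like result.

```python
# Figures quoted in this article from Tariq and colleagues.
# All names here are illustrative, not taken from the paper.

# Admissions scenario, human described as conventional.
severity_algorithm = 5.01  # seriousness rating, out of 6
severity_human = 4.23
keep_human_pct = 55
keep_algorithm_pct = 11

# Quality control scenario, algorithm described as conventional.
qc_keep_algorithm_pct = 31
qc_keep_human_pct = 7


def severity_gap(a: float, b: float) -> float:
    """Difference in rated seriousness, rounded to two decimals."""
    return round(a - b, 2)


def preference_ratio(favoured_pct: float, other_pct: float) -> float:
    """How many times more often the favoured option was recommended."""
    return round(favoured_pct / other_pct, 1)


gap = severity_gap(severity_algorithm, severity_human)
human_conventional_ratio = preference_ratio(keep_human_pct, keep_algorithm_pct)
algorithm_conventional_ratio = preference_ratio(qc_keep_algorithm_pct, qc_keep_human_pct)

print(f"Severity gap (human conventional): {gap} points")
print(f"Human conventional: human kept {human_conventional_ratio}x as often")
print(f"Algorithm conventional: algorithm kept {algorithm_conventional_ratio}x as often")
```

Whichever option was framed as conventional was recommended several times more often than the alternative.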

The researchers called this “alternate aversion”; we’re suspicious of whichever option isn’t the status quo, whether that’s an algorithm or a human. This connects to well-established research on status quo bias, first documented by Samuelson and Zeckhauser (1988), and related phenomena like omission bias, where people judge errors from inaction more leniently than errors from action. When we stick with the conventional option and it errs, that feels like an error of inaction. When we choose the alternative and it errs, that feels like an error of action — and we judge ourselves more harshly for it.

Implications for product development

If you’re building products with AI features, this research reframes a fundamental challenge. The problem might not be convincing users that your algorithm is better than a human. It’s convincing them that it’s normal.

Consider how you typically introduce AI features. “Try our new AI-powered search.” “Experimental AI assistant.” “Beta: let AI help with your writing.” Every one of these framings signals that the AI is an alternative, an experiment, something outside the conventional flow. And according to this research, that’s exactly how to ensure users judge its mistakes more harshly.
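One way to act on this is to audit your interface copy for words that mark a feature as the alternative. The sketch below is a hypothetical starting point in Python: the marker list is my own assumption, drawn from the example framings above, and the study did not test specific words.

```python
import re

# Hypothetical marker words, taken from the example framings above.
# This list is an assumption for illustration, not something the study tested.
ALTERNATIVE_MARKERS = ("new", "experimental", "beta", "try")


def find_alternative_markers(copy: str) -> list[str]:
    """Return the marker words that appear as whole words in a piece of UI copy."""
    words = set(re.findall(r"[a-z]+", copy.lower()))
    return [marker for marker in ALTERNATIVE_MARKERS if marker in words]


labels = [
    "Try our new AI-powered search.",
    "Experimental AI assistant.",
    "Beta: let AI help with your writing.",
    "Search",
]

for label in labels:
    print(f"{label!r}: {find_alternative_markers(label)}")
```

A plain label like “Search” returns no markers, while each of the three example framings is flagged. A real audit would need a richer word list and human review, since the same word can be harmless in one context and distancing in another.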

The researchers manipulated three characteristics to establish conventionality: historic use, prevalence, and system dependence. This approach draws on established findings in choice architecture, particularly Johnson and Goldstein’s (2003) work showing how default options shape decisions, and research on the endowment effect, where we value things more once we perceive them as ours. When participants believed an approach had been used for years, was widely adopted, and was integral to operations, they were more forgiving of its errors. This held true whether the conventional option was human or algorithmic.

What’s particularly interesting is that the conventionality effect was strongest on behavioural intentions rather than severity judgements. People still rated algorithmic errors as somewhat worse even when algorithms were conventional, but they chose to keep using them anyway. This suggests a gap between how users feel about AI errors and what they actually do about them. Vocal criticism doesn’t necessarily predict abandonment.

The research also revealed several subtleties worth noting. Detection errors, where something should have been caught, were judged more harshly than prediction errors involving inherent uncertainty. When your AI is forecasting customer churn or recommending content, users seem to understand that perfect accuracy is impossible. When it’s meant to catch quality issues or security threats, however, mistakes feel more avoidable.

Human outcomes also matter. The admissions scenario involved decisions affecting people’s lives, whilst the quality control scenario involved inanimate objects. Algorithmic errors received less tolerance in the human-stakes context. This aligns with research by Castelo and colleagues (2019) showing task-dependent algorithm aversion, and work by Bigman and Gray (2018) demonstrating that people are particularly averse to machines making moral decisions. For these applications, establishing conventionality might not be enough to overcome ethical concerns.

There’s also the question of what counts as “conventional” in practice. The researchers used clear, explicit framings — telling participants directly that an approach had been used for 10 years by 85% of the industry. In real products, conventionality is rarely so cleanly established. Users might encounter AI features without any context about their maturity or adoption. Or worse, they might have context that signals the opposite: beta badges, disclaimer text, opt-in switches that suggest the “real” way to do things is still human-powered.

The broader context

This research sits within a larger shift in how people interact with algorithms. Whilst algorithm aversion has been documented since Meehl’s 1954 work, recent studies have also identified the opposite pattern. Logg and colleagues (2019) found that people often exhibit “algorithm appreciation”, actively preferring algorithmic advice in certain contexts, particularly for objective tasks.

We’re seeing this play out in the real world. Despite ongoing debates about AI ethics and accountability, algorithmic systems have become conventional in many domains. Research by Grove and Meehl (1996) showed that algorithms outperform humans in forecasting and decision-making tasks, yet adoption has been slow. Now, decades later, we rely on them for navigation, content recommendations, fraud detection, and increasingly for creative work. As that reliance grows, our tolerance for their errors seems to be growing too.

The question for product builders is how to manage the transition period, when your AI is capable but not yet expected. This research suggests that framing matters enormously during this window. Position AI as experimental and users will judge its mistakes accordingly. Position it as fundamental and users become more forgiving.

This doesn’t mean dismissing legitimate concerns about AI systems. Questions about accountability, transparency, fairness, and explainability remain crucial, particularly for high-stakes decisions affecting people’s lives. It does, however, suggest that some of what we’ve labelled as algorithm aversion might actually be discomfort with change—a familiar human response to unfamiliar ways of doing things.

As Douglas Adams put it:

a) Everything that’s already in the world when you’re born is just normal;

b) anything that gets invented between then and before you turn 30 is incredibly exciting and with any luck you can make a career out of it;

c) anything that gets invented after you’re 30 is the end of civilisation as we know it until it’s been around for about 10 years when it gradually turns out to be alright really.

Douglas Adams, “How to Stop Worrying and Learn to Love the Internet” (1999)

Really insightful piece on reframing algorithm aversion as status quo bias. The finding that conventionality framing closes the error tolerance gap is huge for product strategy. I've seen this play out in a few deployments where the "beta" label killed adoption even though the AI outperformed existing systems. The Douglas Adams quote nails the generational aspect too, tho I think the 10-year normalization window is shrinking fast with how quick AI is iterating.

Appreciate this reframe. Alongside history/prevalence, it also makes me think about the day-to-day mechanics: when there’s a clear undo/override and a clear way to get it checked, users may be more willing to stick with the system after it makes a mistake.